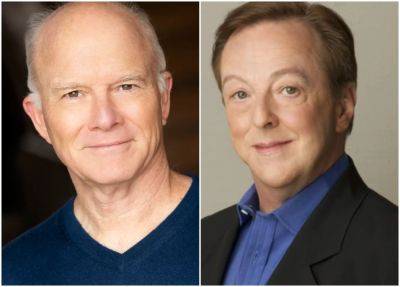

Michael Schneider, Variety Editor at Large

Two more “Frasier” alums are making a comeback: Dan Butler, who played Bulldog (the sports host at Frasier’s radio station on the original series), will visit the “Frasier” revival’s Season 2 on Paramount+, as will Edward Hibbert, who played Gil Chesterton on the O.G. show.

Artists Urge Action on AI, but Congress Is Slow to Respond

22.05.2024 - 18:37 / variety.com

Gene Maddaus, Senior Media Writer

Scarlett Johansson called out OpenAI this week for mimicking her voice for a new chatbot, underscoring the urgency that many artists feel about regulating artificial intelligence. But in Congress, taking on AI is starting to resemble wrestling an octopus. It’s so sprawling, it’s hard to know how to begin.

The Senate AI Working Group issued a “roadmap” last week. But it left vague where Congress is going and when it will get there. Some have warned against stifling innovation.

And while some regulation is likely to happen eventually, it appears it will be done piecemeal, one tentacle at a time. “From the House perspective, part of the challenge is that we’re under Republican control,” Democratic Rep. Ted Lieu of California, co-chair of the House task force on AI, tells Variety. “It’s been very chaotic. We’re just trying to stop stupid stuff.”

Hollywood unions and artists’ organizations argue that AI trains on artists’ work and, if left unchecked, will churn out cheap imitations that will steal their jobs. They are lobbying for proposals to address copyright concerns and to outlaw nonconsensual AI “deepfakes,” which threaten performers.

Some advocates envision a licensing system like ASCAP or BMI, in which AI companies would pay royalties to train on copyrighted work, with artists having the ability to opt out. “Our interest is in making sure individual creators are being paid for the ingestion of their content into AI platforms, for which they are not currently remunerated despite the fact that their souls are being stolen,” says James Silverberg, CEO of the American Society for Collective Rights Licensing, which distributes royalties to illustrators and photographers. Some artists


Austin Butler Responds to Rumors of Starring in 'Pirates of the Caribbean' Reboot

Austin Butler is opening up about the possibility of Pirates of the Caribbean in his future.

![‘Made In England’ Review: Martin Scorsese Offers An Intimate Tour Through The Radical Romanticism Of Powell & Pressburger Cinema [Tribeca]](https://popstar.one/storage/thumbs_400/img/2024/6/10/1573367_iip.jpg)

‘Made In England’ Review: Martin Scorsese Offers An Intimate Tour Through The Radical Romanticism Of Powell & Pressburger Cinema [Tribeca]

True cinephilia lives outside the confines of your front door, way past the boundaries of your home and native language. So, for all the talk of Martin Scorsese as a preeminent master of American cinema, it’s always been heartening to know the filmmaker and cineaste has appreciated all aspects of international cinema, from the East to the West and beyond.

Austin Butler is Classically Handsome in Pinstriped Suit at 'The Bikeriders' Sydney Premiere

Austin Butler is keeping busy promoting his new movie The Bikeriders Down Under!

Austin Butler Poses with Motorcycle at 'The Bikeriders' Press Event in Sydney

Austin Butler has arrived Down Under!

Manchester Mayor Andy Burnham shares support for the £1 arena ticket levy to save grassroots venues: “Urgent action is needed”

full report into the state of the sector for 2023, showing the “disaster” facing live music with venues closing at a rate of around two per week. Presented at Westminster, the MVT echoed their calls for a levy on tickets on gigs at arena size and above and for major labels and such to pay back into the grassroots scene, arguing that “the big companies are now going to have to answer for this”. The Music Venue Trust has pushed for this system to be introduced as a way of safeguarding the UK’s talent pipeline, similar to how the Premier League funds local football clubs.

Barry Keoghan In Talks To Join Amazon MGM Studios Adaptation Of Don Winslow’s ‘Crime 101’

Barry Keoghan is in negotiations to join Chris Hemsworth and Mark Ruffalo in Amazon MGM Studios’ adaptation of Don Winslow’s novella Crime 101. He will co-star with Hemsworth, who is also in talks to star and produce alongside producing partner Ben Grayson. The film will be released in theaters next year.

Austin Butler Looks Effortlessly Cool on the Back of a Motorcycle During 'Bikeriders' Event

Austin Butler is showing off his motorcycle-riding abilities while attending an event for his new movie The Bikeriders.

'I'm in!' - Jurgen Klopp makes Liverpool FC promise over Man City 115 charges

Jurgen Klopp said he would be willing to wait for a bus parade if Manchester City were stripped of Premier League titles for rule-breaking.

Jurgen Klopp aims subtle dig at Erik ten Hag over Jadon Sancho Manchester United fallout

Jurgen Klopp couldn't resist a dig at Erik ten Hag's handling of Jadon Sancho's Manchester United career as he took a swipe at the club's transfer dealings when speaking at a farewell event on Tuesday night.

Amy Winslow, Artist Manager Who Worked With Guided by Voices and Yoko Ono, Dies at 59

Jem Aswad, Executive Editor, Music

Amy Winslow, an artist manager, radio executive and 15-year veteran of the Manage This! firm who worked closely with Guided by Voices, Yoko Ono, Sean Ono Lennon, the Dirty Projectors, Laura Gibson, Surfer Blood, Kiwi Jr. and others, died on May 23 after a battle with breast cancer, the company confirms to Variety.

'I cannot say' - Arsenal chief breaks silence on Man City's 115 charges and agrees with Jurgen Klopp

Arsenal sporting director Edu has followed the lead of former Liverpool manager Jurgen Klopp in choosing to praise Pep Guardiola rather than comment on Manchester City's outstanding 115 charges from the Premier League.

Austin Butler & Jodie Comer Attend Star-Studded Indy 500 Race, Serve As Honorary Starters

Austin Butler and Jodie Comer pose for photos while stepping out for the 2024 Indianapolis 500 race held at Indianapolis Motor Speedway on Sunday (May 26) in Speedway, Ind.

SNP 'too full of young people who have just come through the political ranks', says Nicola Sturgeon

The SNP is "too full of young people who have just come through the political ranks", Nicola Sturgeon has said.

Jack Butland in defiant Rangers stance as he names what final Premiership table DOESN'T show

Jack Butland insists ‘defiant’ Rangers are out to salvage some pride when they take on Celtic at Hampden.

Inside Jurgen Klopp's post-Liverpool life of luxury with £3m holiday home that puts the Love Island villa to shame

After nearly nine years as the manager of Liverpool FC, Jurgen Klopp is getting set to say farewell to the team as he steps down from his role. During his time at the club, Jurgen has helped to revolutionise the team, with Liverpool lifting the UEFA Champions League, Premier League, FIFA Club World Cup and FA Cup under his watchful eye.

Jurgen Klopp's beautiful wife Ulla clear on what she thinks about Liverpool as he manages his last game

Jurgen Klopp's departure from Liverpool Football Club at the end of the season also marks the end of Ulla Sandrock's time in Merseyside. The German will manage his final game as the Reds boss against Wolves, ending a chapter of the greatest period of success Liverpool have had since the 1980s under previous managers Bob Paisley and Joe Fagan. Jurgen’s exit from Anfield after joining in 2015 is a heart-wrenching one for fans worldwide, as he'll soon return to his native Germany with wife Ulla Sandrock. The couple, who met at Oktoberfest in the German city of Munich, have been married since 2005 and have been active members of the community, with Ulla dedicating time, effort, and money to their adopted home.

Pep Guardiola agrees with Jurgen Klopp over Man City title myth

Pep Guardiola laughed off the idea that he is the main reason behind Manchester City's dominance, as rivals hope for his departure.

Mystery Jets’ Blaine Harrison has “huge respect” for artists in Great Escape boycott but “no shade on those that haven’t” as he shares advice

Mystery Jets’ Blaine Harrison has said he has “huge respect” for those boycotting The Great Escape, but insists there is “no shade on those who haven’t”. The 2024 edition of the event – which showcases new and rising artists – is currently taking place across various music venues in Brighton until Saturday (May 18). The Great Escape is sponsored by Barclays, which has been a source of controversy amid the ongoing conflict in Gaza because of the bank’s financial investment in companies that supply arms to Israel. Harrison has weighed in on the topic now, writing in a lengthy social media post: “Huge respect for the 100+ artists who have pulled out of The Great Escape in Brighton this weekend on the grounds of its sponsorship by Barclaycard, who are actively supporting the genocide and horrific war crimes in Gaza by investing in companies supplying arms to Israel.” As The Great Escape kicks off in Brighton today, huge respect for the 100+ artists who have pulled out due to the sponsorship by barclaycard. But no shade on those that haven’t.

Ilana Glazer & Michelle Buteau Celebrate Their Movie 'Babes' at NYC Red Carpet Premiere!

Ilana Glazer and Michelle Buteau’s new movie Babes is hitting theaters this weekend!